I’m a geek. I love tech. But I’m not an easy sell. The world is full of artificial intelligence that isn’t as intelligent as you’d think. I previously put the literary analysis website Who do you write like? to the test and found it could not accurately identify James Joyce as himself.

This month, I put two more literary tools under the spotlight—Fictionary and Autocrit. They’ve reinforced my view that, while algorithms are pretty good at copy-edit-level text analysis, they aren’t yet up to the job of structural analysis. I’m sticking with wetware for that task.

Fictionary

Fictionary is a web-based program that claims to provide a structural assessment of your book. According to its website it:

- Automates visualisation of your story arc

- Evaluates your story, scene-by-scene, against 38 story elements

- Guides you through an edit of plot, character, and scene

- Offers tips for rewrites

The service costs US$20 a month or $200 a year. I used the 14-day free trial.

Fictionary runs only on Google Chrome or Safari browsers and requires the Word docx format.

In my test, it felt buggy. The upload cut off the first two chapters of my novel, and did so again on a second attempt. Manual corrections to some lists (such as the character list) didn’t take.

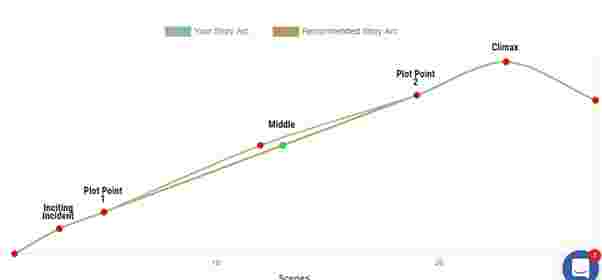

The program provided this visual of the arc for my novel.

For comparison, this is the story arc Fictionary generated for Dickens’ A Christmas Carol.

Pretty much the same. The story arc is a template, rather than an analysis of the text.

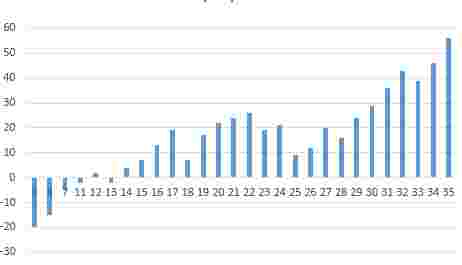

My own schematic of the chapters in my book looks nothing like this template arc. My cumulative diagram (below) is more like a roller coaster. It shows the number of stimulus/response pairs in each chapter (a proxy for dramatic intensity) and whether the protagonist’s emotion is positive or negative (a proxy for advances and setbacks).
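A diagram like the one described above can be approximated in a few lines. This is only a minimal sketch: the chapter figures are invented for illustration, and multiplying the pair count by the sign of the emotion before taking a running total is an assumption about how the two measures might be combined, not a record of how my actual diagram was built.

```python
from itertools import accumulate

# Hypothetical data, one tuple per chapter:
# (number of stimulus/response pairs, +1 for positive emotion or -1 for negative)
chapters = [(4, +1), (6, -1), (3, -1), (8, +1), (5, -1), (9, +1)]


def cumulative_arc(chapters):
    """Return the running total of signed intensity, one value per chapter."""
    signed = [pairs * sign for pairs, sign in chapters]
    return list(accumulate(signed))


arc = cumulative_arc(chapters)
print(arc)  # [4, -2, -5, 3, -2, 7]
```

Plotting these values against chapter number gives a line that rises with advances and falls with setbacks, with steeper moves in the more intense chapters.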

Fictionary’s template arc didn’t give me confidence that the algorithm was smart enough to parse my book. And it isn’t. The part indicated as the inciting incident isn’t the inciting incident and the climax isn’t the climax. You have to feed it with lots of coding. As you do so, the program offers editing hints. These are all generic rules of thumb, rather than deep analysis of the text. Examples are:

- What is the purpose of this scene?

- What type of scene is this (dialogue, thought, description, action)? Variety is important

- Anchor the beginning of a new scene so the reader doesn’t get lost

- Provide a hook for the scene

You have to divide your manuscript into scenes, code each scene, pick out your characters from a list of proper nouns, and provide lots of other information. I wasn’t convinced that this was any advance on doing the analysis myself.

Autocrit

AutoCrit analyses your work to identify areas for improvement, including pacing and momentum, dialogue, strong writing, word choice and repetition. You can also compare your composition to that of popular authors.

This screenshot shows the summary for my book.

This score of 80.52 is described as being in the 75-85 territory of best sellers, which is a little hard to believe since I haven’t finished editing. George R R Martin’s Game of Thrones gets a tally of 79.99. However, Autocrit is measuring something, since a much earlier draft of my novel scored lower, at 71.39. Another novel, the one I’m most proud of, scored 84.96.

The indicators the program is using to create the scores are those in the bottom diagram: repetition, pacing, dialogue, word choice and “strong writing”. This latter category includes overuse of adverbs, consistency of tense, showing versus telling, clichés, redundancies, and filler words. Which means this isn’t really a structural analysis. It’s a copy editor with a beguiling summary screen.

Copy editors

If AI is not yet smart enough to perform a structural edit, it’s invaluable for the more mechanical copy editing process.

I’ve been using ProWritingAid since 2015 and I swear by it. ProWritingAid analyses your text and produces reports on areas such as overused words, writing style, sentence length, grammar and repeated words and phrases.

So, how does ProWritingAid compare with Autocrit?

- Autocrit ran significantly faster than ProWritingAid. The latter took two-and-a-half minutes to analyse 75,000 words, compared with around 30 seconds for Autocrit.

- Autocrit was not as effective at finding repeated phrases.

- Autocrit highlighted overuse of passive voice, whereas ProWritingAid found this to be well within target. On closer examination, Autocrit is using frequency of the verbs to be and to have as proxies for passive voice, and is thus less accurate here.

- Both programs flagged overuse of “filler” words. Autocrit found the main culprits to be “that”, “very”, “seem” and “really”. The list of overused words made editing easy and allowed me to bump up my style score in Autocrit from “too much” to “average”. But again, ProWritingAid is doing something more complex, and my filler score there dropped only slightly, from 47.1% to 46.8%. ProWritingAid suggests that no more than 40% of words should be “fillers”. In my defence, Shakespeare’s “to be or not to be” speech from Hamlet scores 53.4%.

- Autocrit gave me a pass for frequency of adverbs (which it measured at 11), whereas ProWritingAid flagged the 12 it found as borderline. Neither program states what an acceptable rate is, though the Hemingway program uses a threshold of under 1% of words.

- Both programs okayed my readability, though they produced different values for the Flesch reading ease score (83 in ProWritingAid and 78 in Autocrit).

- Neither was very accurate at detecting show-versus-tell issues, which is unsurprising because word or sentence analysis is unlikely to be very sensitive on this problem.
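To illustrate why counting forms of “to be” and “to have” is a blunt stand-in for passive voice, here is a rough sketch. It is not how either program works internally: the word lists, function names and sample sentences are all assumptions for illustration, and the “be + word ending in -ed/-en” check is itself a crude heuristic that misses irregular participles.

```python
import re

# Assumed word lists for illustration only.
BE_FORMS = {"be", "am", "is", "are", "was", "were", "been", "being"}
BE_HAVE = BE_FORMS | {"have", "has", "had", "having"}
# The four fillers named in the list above.
FILLERS = {"that", "very", "seem", "really"}


def tokenize(text):
    """Lower-case the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def rate(words, targets):
    """Percentage of words that appear in the target set."""
    if not words:
        return 0.0
    return 100.0 * sum(w in targets for w in words) / len(words)


def be_have_rate(text):
    """Proxy measure: share of words that are forms of 'to be' or 'to have'."""
    return rate(tokenize(text), BE_HAVE)


def passive_candidates(text):
    """Count a form of 'to be' directly followed by a word ending in -ed or -en."""
    words = tokenize(text)
    return sum(
        1
        for first, second in zip(words, words[1:])
        if first in BE_FORMS and re.search(r"(ed|en)$", second)
    )


sample = "She was happy and had a plan. The door was opened by the butler."
print(round(be_have_rate(sample), 2))  # 21.43
print(passive_candidates(sample))      # 1
print(rate(tokenize("I really think that it is very odd."), FILLERS))  # 37.5
```

In the sample, three of the fourteen words are forms of “to be” or “to have”, but only “was opened” is a passive construction. A tool that relies on the first count alone will flag active sentences as passive.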

Overall, the two programs have broadly similar features, though Autocrit is much more expensive. You get a year’s subscription to ProWritingAid for the cost of two months with Autocrit.

The bottom-line verdict

- No algorithms are yet sophisticated enough, despite overblown marketing claims, to replace a human editor for structural edits.

- Copy editing of spelling, punctuation, grammar, and word-use can now be automated.

- Autocrit and ProWritingAid do a pretty good job of copy editing. Autocrit’s unique feature is the comparison with published fiction. ProWritingAid offers a more complex analysis and better value for money.

Free Alternatives

Some of the features of the copy editing programs are available in free software. Grammarly and After the Deadline will review spelling and grammar. Hemingway will assess readability scores, and detect overuse of adverbs and passive voice.

Hi Neil,

I think we are a long way off the novel being produced by AI. I wonder what kind of book it might be. Where would the imagination for the story come from? Would there be an extensive learning database to compensate for human experience?

I used ProWritingAid with my novel MISSING – a genre-specific piece aimed at YA/adult women.

I was pleased with the ProWritingAid reports on word usage, style, and readability.

Before I felt confident using it, I did write lots of deliberate rubbish and also technical reports to see what the results were – mostly bad. However, I do find this software useful for confirming the grammar and alternative spellings.

I am not sure if I would spend my time loading information into the AutoCrit or Fictionary.

I suspect some politicians use the latter.

Regards,

James.


Thanks for sharing your experience, James. As I said, I swear by ProWritingAid. I also find it great for picking up words I use too often and repeated phrases.
